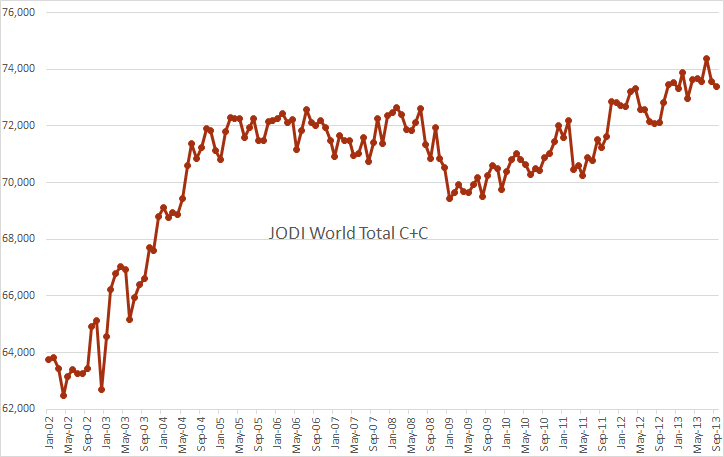

The JODI data came out a few days ago. Below is JODI World Total C+C, with EIA data used for countries not reporting to JODI. I also use EIA data for Venezuela and Iran, because for these two countries JODI uses self-reported figures that are politically motivated and inflated by about one million barrels per day for Iran and half a million barrels per day for Venezuela.

The data is in kb/d, with the last data point in September 2013.

Notice that JODI shows a new world high in July, just as the EIA did, but production then fell 976,000 barrels per day from July to September.

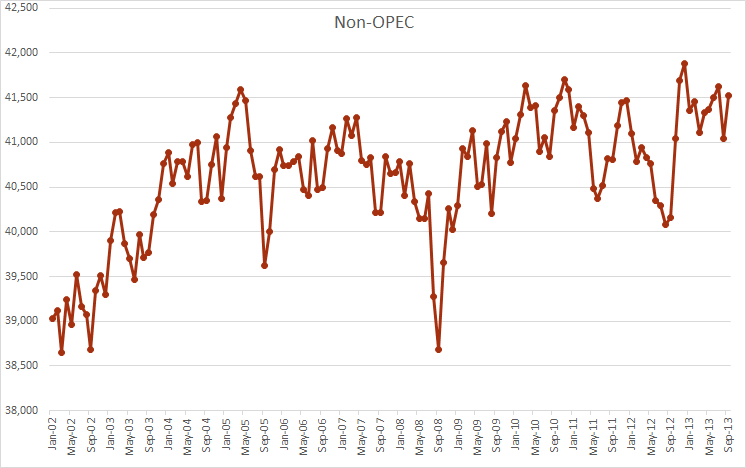

JODI has Non-OPEC production about 350,000 barrels per day below its December 2012 peak.

I don’t put much stock in the JODI data, but I do find it interesting to look at occasionally. And since it usually runs almost two months ahead of the EIA data, it gives me some idea of where production will be in the EIA report two months from now.

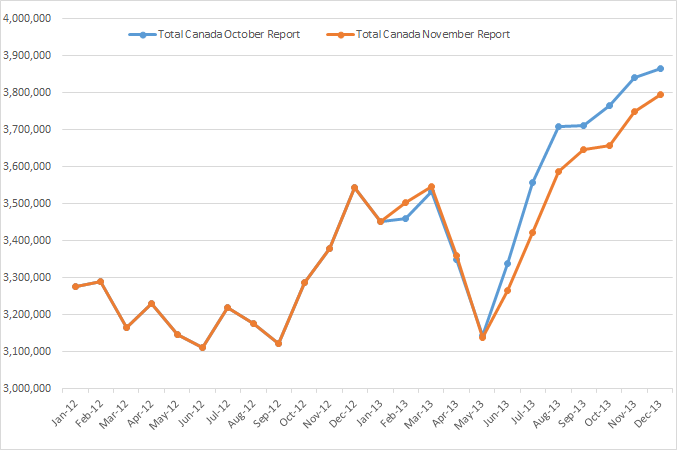

Canada’s National Energy Board came out with Canada’s production of “Crude Oil and Equivalent” about a week ago. The data is in cubic meters per day, so it must be converted to barrels per day. You may remember I posted a chart of their data about a month ago.

The data after July is where they expect production to be. Apparently they are a little behind on their data gathering. And as you can see they have lowered their expectations somewhat since their report in October.
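The cubic-meters-to-barrels conversion the NEB figures need is a single constant. Here is a minimal Python sketch, assuming the standard US oil barrel of 42 US gallons (0.158987294928 cubic meters, about 6.29 barrels per cubic meter); the input in the example is a made-up round number, not an NEB figure.

```python
# One US oil barrel = 42 US gallons = 0.158987294928 cubic meters.
CUBIC_METERS_PER_BARREL = 0.158987294928


def m3_per_day_to_bpd(m3_per_day: float) -> float:
    """Convert a production rate in cubic meters per day to barrels per day."""
    return m3_per_day / CUBIC_METERS_PER_BARREL


if __name__ == "__main__":
    # Hypothetical input: 500,000 m3/d works out to roughly 3.14 million b/d.
    print(f"{m3_per_day_to_bpd(500_000):,.0f} b/d")
```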

But now I must tell you about this: Peak Oil in the IEA’s World Energy Outlook 2013. It appears to be the IEA’s official position on peak oil and on the effect LTO (Light Tight Oil, or shale oil) will have on the peak. The text is from pages 447 and 448 of the report.

Spoiler alert: They are also peak oilers, but the peak, they believe, will be after 2035.

Has LTO resolved the debate about peak oil?

It has become fashionable to state that the shale gas and LTO revolutions in the United States have made the peak oil theory obsolete. Our point of view is that the basic arguments have not changed significantly. To understand why, it is useful to revisit the main peak oil argument, which is based on the observation that, for a given basin or country, the amount of oil found and the amount produced tend to follow a rising, peaking and then declining curve over time – known as a “Hubbert” curve. This is either because big fields tend to be found and produced first, followed by smaller fields as the basin matures, or because the cheapest fields are produced first and, as depletion sets in, costs increase (because of smaller, more complex fields) and the basin is outcompeted by other regions. This phenomenon has been observed in many countries (Laherrere, 2003). Where technology opens up a new set of resources that were not previously accessible (as with deepwater or LTO), there can be multiple Hubbert peaks, as each type of resource moves up and then down the curve.

The crux of the peak oil argument has been the assumption that these dynamics, which are well established empirically at the basin or country level, will also take place at the world level (an assumption that has not been vindicated by empirical facts so far). For the purposes of the peak oil argument, the advent of LTO (or other technology breakthroughs) may shift the overall peak in time, but it does not change the conclusion: once the peak is reached, decline inevitably follows rather quickly (and, given the amount of LTO resources compared to the total resources, it could be argued that the peak would be shifted by only a few years in any case).

It is this last assumption – that it is possible to transpose observed country or basin-level dynamics to the world level – that is open to serious doubt. In all the countries that have seen oil production peak, oil demand has continued to increase. This demand has been satisfied, where necessary, by imports from regions that were still pre-peak and therefore lower cost. At the world level since there is no possibility to import, demand has to be equal to supply. If supply is limited, price will rise, reducing demand (and increasing supply). This price mechanism is expected to lead to a long plateau, or slow decline, rather than the rapid decline observed on a country-by-country basis.

With the acceptance that demand is as important as geology and price is determining worldwide supply, it becomes clear that other factors can play a crucial role. One that has been emphasised in successive Outlooks is the role of government policies. Whether driven by the desire to tackle climate change, or simply to encourage efficient uses of resources, government policies have a large effect on future oil demand. This is illustrated by the policy-driven differences between the scenarios; where we see oil production peaks (as in the 450 Scenario) it is not because oil is becoming more difficult and more expensive to produce, but because demand decreases as a result of policy choices.

Taking into account the large amount of unconventional resources that becomes available as oil prices increase, in addition to the significant remaining conventional resources and the sizable potential for EOR in conventional fields, no peak occurs before the end of the projection period. (In peak language, the URR value that enters into the Hubbert equation is large enough to delay the peak until after 2035). This was already the case before LTO. It has not changed much with the arrival of LTO.
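For reference, here is the textbook single-cycle logistic form of the Hubbert curve, which shows how the URR mentioned in the quote sets the timing of the peak. This is the standard simplified model, not necessarily the one the IEA actually runs; $Q(t)$ is cumulative production, $P(t)$ the production rate, $k$ a growth constant and $t_m$ the peak year.

$$
Q(t) = \frac{\mathrm{URR}}{1 + e^{-k\,(t - t_m)}}, \qquad
P(t) = \frac{dQ}{dt} = k\,Q(t)\left(1 - \frac{Q(t)}{\mathrm{URR}}\right)
$$

Production peaks when $Q(t_m) = \mathrm{URR}/2$, at $P_{\max} = k\,\mathrm{URR}/4$. If cumulative production at some reference year $t_0$ is $Q_0$, solving the first equation for the peak year gives

$$
t_m = t_0 + \frac{1}{k}\ln\!\left(\frac{\mathrm{URR}}{Q_0} - 1\right)
$$

so, for a fixed $k$ and $Q_0$, a larger URR pushes the peak later, which is the sense in which a big enough URR "delays the peak until after 2035."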

So that’s it, no peak until after 2035. I’m betting that date will be revised… and soon.

After spending a lifetime observing supposedly independent agencies of government making predictions, it seems perfectly clear to me that the people running such agencies are seldom ever willing to make any prediction for public consumption that might upset the apple cart of business as usual.

These people are, after all, bureaucrats, subject to being fired for irritating the people who are depending on favorable reports to help them get re-elected; or, if protected from firing by civil service rules, to being transferred to some place very unpleasant to clean the toilets.

The most you can expect from them is that there will be hints between the lines if you are willing to look for them.

In this case the hint is to look into the “export land” model and to think about per capita supplies rather than total supplies; the per capita figure is by far the more crucial one.

I for one expect the long-term oil price trend to continue up, on average, every year for the foreseeable future, and world production to peak within the next few years.

I was considerably surprised that the world economy proved to be resilient enough to adapt as well as it has to hundred dollar oil.

Given the way government debt is exploding all over, I wonder if this resilience is real, or temporary and dependent on deficit financing.

The EIA has been lying for years. Why should they change? It’s like the lies that emanate about the key economic statistics from the US gov’t.: GDP and inflation. People who buy food, gas, insurance know the gov’t lies. Shadowstats.com documents the multitude of lies!

Read a comment today at Technology Review about Japan Drilling for Methane Hydrates. Lots of happy talk once again, and only a little talk about costs at the end, which is handwaved away.

“The crux of the peak oil argument has been the assumption that these dynamics, which are well established empirically at the basin or country level, will also take place at the world level (an assumption that has not been vindicated by empirical facts so far).”

What kind of thinking allows someone to assume that what will happen in the world as a whole will be any different from what has happened, and is happening, at the basin or country level? I’m sure that if everyone keeps sprinkling that pixie dust the magic will continue forever and ever. And even if 2035 were the date for global peak oil, how does that make things any better for the future of our oil-based civilization?

Not to mention that since TheOilDrum closed down I’ve been spending more time at RealClimate.org… things are not looking all that good if we continue on our current path!

Cheers!

Fred

“. . . it is not because oil is becoming more difficult and more expensive to produce, but because demand decreases”

“Demand” has two parts, “desire” and “ability to pay.” To say demand decreases implies that it is desire that will fall, when in fact it is the ability to pay that falls.

LOL, using such implied logic one might say “The reason I don’t cover my house in gold leaf isn’t because gold is limited and expensive but because I just don’t feel like it.”

The entire point of discussing PO is that higher prices from higher-hanging fruit will reduce my ability to pay and affect my personal economy; why else would I care?

After a long enough time, even a government agency protected by the corporate mainstream media has to make some sort of token effort toward telling the truth, because even a tea party type or the most dyed-in-the-wool liberal sooner or later notices the truth, which can be hidden only so long.

The EIA has at least gotten around to admitting there might actually be such a thing as peak oil, although it still insists it is too far off to matter. That’s progress of a sort, and about the best we can hope for, for now, as I see it.

The people there have no actual desire to be seen as professional fools a few years down the road, although they are obviously willing to pay that price in order to collect their salaries and bennies. And given the culture they come from (business schools and politics, etc., for the most part), just about all of them probably actually believe that ways will be found to keep on increasing oil production, more or less forever.

You can find plenty of true believers outside of churches. Economists and their ilk are among the worst of the lot.

The professional geologists and other hard-science people employed by such agencies have to speak through their political bosses, and the best they can do is insert a few “read between the lines” warning flags here and there.

Of course here and there you find one with the personal and professional backbone to just quit playing the happy face game and seek other employment, but there are damned few open job slots for bringers of bad news.

“even a tea party type or the most dyed in the wool liberal sooner or later notices the truth which can be hidden only so long.”

Mac, was that a typo? Shouldn’t such a remark be directed at conservatives, not liberals? After all right wing conservatives are the most ardent deniers of climate change and peak oil. Liberals are far more likely to accept peak oil and climate change as fact. Especially those dyed in the wool liberals like me. 😉

I’m working on updating my Export Capacity Index (ECI, ratio of production to consumption) paper to incorporate 2012 annual data, and I’m working on simplifying the presentation. A summary of two graphs follows.

Normalized Production, ECI Ratio, Annual Net Oil Exports and Remaining Post-1995 CNE (Cumulative Net Exports) by year (1995 values = 100%), for 1995 to 2002, for the combined production and consumption numbers of the Six Country* Case History:

2002 values:

- Production: 93%
- ECI ratio: 83%
- Net exports: 65%
- Remaining CNE: 16%**

*Six major net oil exporting countries that have hit or approached zero net oil exports since 1980, excluding China: Indonesia, UK, Egypt, Vietnam, Argentina, Malaysia (also known as IUKEVAM).

**Estimated remaining post-1995 CNE at the end of 2002 was 31%, based on extrapolating the 1995 to 2002 rate of decline in the ECI ratio.

Normalized Production, ECI Ratio, Annual Net Oil Exports and Remaining Post-2005 CNE by year (2005 values = 100%), for 2005 to 2012, for the combined production and consumption numbers of the Top 33 Net Oil Exporters in 2005 (Global Net Exports, or GNE):

2012 values:

- Production: 102%
- ECI ratio: 87%
- Net exports: 96%
- Estimated remaining CNE: 79%
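The normalization behind these summaries is simple arithmetic: the ECI ratio is production divided by consumption, net exports are production minus consumption, and each series is expressed as a percentage of its base-year value. Here is a minimal Python sketch of that step; the function name and the input numbers are hypothetical, chosen only to show the mechanics, and are not the IUKEVAM or GNE data. It does not attempt the CNE depletion estimate.

```python
from __future__ import annotations


def normalized_metrics(base: tuple[float, float],
                       year: tuple[float, float]) -> dict[str, float]:
    """Return production, ECI ratio and net exports for `year` as % of `base`.

    Each argument is a (production, consumption) pair in the same units,
    e.g. kb/d. Hypothetical helper for illustration only.
    """
    p0, c0 = base
    p1, c1 = year
    eci0, eci1 = p0 / c0, p1 / c1   # Export Capacity Index = production / consumption
    ne0, ne1 = p0 - c0, p1 - c1     # net exports = production - consumption
    return {
        "production": 100 * p1 / p0,
        "eci_ratio": 100 * eci1 / eci0,
        "net_exports": 100 * ne1 / ne0,
    }


if __name__ == "__main__":
    # Made-up inputs: production up 2%, consumption up 20%.
    for name, value in normalized_metrics((2000, 1000), (2040, 1200)).items():
        print(f"{name}: {value:.0f}%")
```

With these made-up inputs production rises to 102% of the base year, but because consumption grows faster, the ECI ratio falls to 85% and net exports to 84%, which is the export land effect in miniature.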

We’ve got plenty of climate scientists who seem to be making an ever-louder din about climate change and what may happen if nothing really gets done about it (in time), etc. So maybe, just maybe, if their calls of urgency are finally followed up on (whenever that is), the “rest of the FFs will be left in the ground” and all those nice graphs will take a nosedive… Well, one can hope…

You are dreaming of course. Nothing will ever be done about climate change. Those graphs will nosedive after production starts to fall because of depletion and not one day before.

I have said it about a thousand times, but once more: people, or at least the vast majority of people, do not hear arguments of coming disaster and act. They always wait until the disaster happens, then react. There will always be people who argue the opposite side of the debate. These people will be regarded as experts by the average person because they are telling them what they want to hear. To quote Francis Bacon: People desire to believe what they desire to be true.

Correction: Demand is desire. There is no ability to pay in “demand”. The ability to pay issue becomes relevant for consumption. Consumption is the combination of demand and ability to pay.

That is, Demand and Consumption are not the same thing. Economists would roar in outrage at this, but unless you’re willing to mine Titan, it’s true.

Watcher, I’ve got to go with Pops on this one. Demand is not desire. I desire a beautiful 21 year old woman in my bed tonight but I cannot demand any such thing. And yes, demand is what you have money to pay for and you demand delivery of that product in exchange for payment. If you don’t pay up then you have no right to demand. Or at least your demand will be ignored and therefore amount to nothing.

There is the law of supply and demand, in which price is the arbiter. If you do not have the money to pay, then you can demand nothing.

You are confusing demand with desire. They are definitely not the same thing.

“You are dreaming of course. Nothing will ever be done about climate change. Those graphs will nosedive after production starts to fall because of depletion and not one day before.”

Hi Ron,

I agree that it is likely that little will be done about climate change; it is unfortunate that people do not believe science. Depletion will help a little, because the rise in prices may make wind and solar more competitive, but there may be enough coal to do serious damage. Hopefully Dave Rutledge’s estimates of the coal resource prove to be correct; maybe then we can keep the world temperature change below 3 °C. It all depends on how cheaply the coal can be mined relative to wind and solar prices (as we start to approach the limits of cheap coal).

DC

I think the explanation of “peak oil” as “what has happened repeatedly in oil basins and countries, will surely happen in the world as a whole” is a weak description of what we learned from the oil fields and countries that peaked. Clearly the world as a whole will peak under different economic conditions than many of the fields or countries studied before. But it will peak, and it will decline, that is what we learned.

As far as predicting the timing and shape of the world production curve, there really isn’t a good historical example for this. If it were just geology it would be a lot easier, but the economics really complicate it. Here is a thought I have been considering. We look at current world production and we see the increase in US LTO. How come we are the only ones really trying? Brazil is not really trying? Kazakhstan is not really trying? Russia is not really trying? I seriously doubt this. I think everyone around the world has seen the same thing we have: good, stable, rising oil prices for the last several years. I think everyone is trying their damnedest just like us, with whatever drills, credit, geology, tech, water, etc. they have. I believe that when the peak hits (along with economics) it will hit hard and bite deep, but that is just me. I don’t understand the Fed / ECB, so don’t ask me when. Never turn your TV off so you don’t miss it!

One thing I’ll credit Maugeri with is the insight that fracked LTO is a whole ’nother animal than conventional oil found in “reservoirs.” In fact, each fracked well is its own reservoir, its own little universe that extends only as far as the fractures. It isn’t a big pool that takes years and billions and corporate handholding of the local government ministers; it’s something done by little companies on a well-by-well basis in conjunction with landowners, which is why the big companies, including the national oil companies, aren’t doing so well. They’re built for big pools of oil.

In the US, unlike most other countries, the mineral rights are private property, there are regulations in place, and there are hundreds of little Mom & Pop outfits. None of that exists elsewhere, or at least not on our scale. Even more, half the rotary rigs in the world are in the US, and most of them are drilling horizontally (1,300 of 3k +/- total in the world).

If peak happens at the midpoint, the second half still has to come from somewhere. It’s not that LTO won’t happen elsewhere, it might, it’s just that like all the x-heavy, kerogen, shale, blah, blah it won’t happen fast enough to offset conventional declines.

Just my 2¢

:^)

Hi Ron,

You are right on, of course, about conservatives denying climate change, etc., to a far, far greater extent than liberals.

But my point is that all sorts of people tend to believe in things that are simply impossible.

I know a number of politically and personally liberal fundamentalist Christians on a first-name basis, meaning they are fundamentalists in the sense that they believe in the book of Genesis and eternal life, and so forth. Two of them are lesbians who created a rift in my family church, such that my parents switched their membership to another church, despite the fact that their own parents and my dead siblings are buried at the old church.

They vote the straight Democratic ticket, etc. They are liberals if I ever met one, and I have proof around here someplace that I used to be a card-carrying liberal myself, in the form of membership documents in the National Education Association, the Operating Engineers, and the ACLU, all expired of course.

So I guess I know a liberal when I meet one, and they don’t come any more liberal than these two ladies.

But so far as you and I are concerned, both of us being scientifically literate, they are as ignorant as fence posts, are they not?

Both liberals and conservatives tend to believe in the eternal economic growth model.

To take a specific instance, Harvard University, which is generally considered a very liberal institution, recently allowed that BAU mouthpiece Maugeri (I hope that is spelled closely enough to identify him) to use the name and reputation of the university to push an “oil in plenty” scenario for the next forty years or longer.

It’s been republished in essence or favorably reviewed hundreds, maybe thousands, of times by now, by just about every liberal-leaning website I have bookmarked, and I have a dozen or more of the more influential ones, such as the NYT and the Guardian, on my regular reading list.

I don’t think there is a single leading liberal politician anywhere who doesn’t hold to the eternal growth paradigm.

I believe this is evidence enough that so called liberals are just as capable of deluding themselves as so called conservatives.

I guess I’m just deluding myself, since the rest of the world insists that Republicans and conservatives are one and the same, but I will cling to the traditional definition of the word, as it is used by an engineer.

Conservative or liberal, the label doesn’t matter; you can’t think effectively unless you are well informed. I try to be well informed.

Anybody with the basics of a science based education can easily understand global warming as the threat it is, and the causes of it.

The only reasonable course of action when threatened with a disaster is to do what you can to avert it. This is just basic thinking, in the real sense of the word: better safe than sorry!

My position as an informed and scientifically literate conservative is that we should be on a war footing with respect to expanding renewables and conservation, for the same reason I believe we need to maintain a robust military establishment. Both policies are, in my estimation, in the best long-term interests of the country, and for that matter, probably the whole world.

The word “conservative” has been hijacked of course, for political ends, by people who aren’t really conservatives, except by the current day political definition.

This is just a Don Quixote thing with me. I know I’m tilting at a windmill, but I don’t intend to give it up.

For me this is something like the inexcusable (to me) improper use of the economic terminology of supply and demand, when somebody says “supply is inadequate to meet demand”.

Supply is not defined as the amount people want to buy at the price they want to pay.

Supply is the quantity sellers will bring to market at a given price, everything else held equal.

Real conservative thinking is based on fire prevention rather than fire fighting.

You and I believe we are already in overshoot, based on a shared understanding of physical realities, and therefore headed for a very hard crash.

Now my take on this as a “conservative” is that we should be doing what we can to soften the landing to the extent possible.

So, as I see it, a conservative thing to do now, for example, is to switch to a European-style health care system, because while doing so has some serious drawbacks, I believe failing to do so will have catastrophic consequences in terms of civil and economic troubles.

Anyway, looking after your fellow man in need is the right thing to do, so long as it is done using some common sense.

Euro health care won’t stop collapse, but it will slow it down some and buy some time, maybe time enough for me to be safely dead of old age, lol.

I don’t want this country to go socialist to any greater extent than absolutely necessary, but on the other hand, I don’t want a French Revolution either.

I don’t have much, but it might be enough that somebody decides to feed me to the guillotine.

As always, please excuse my one finger cross-eyed typing. There doesn’t seem to be an edit function.

Does Bacon’s profound quote also apply to you, Mr. Patterson?

I desire this to be true Mr. Coffee. I desire that I, my children and grandchildren would live in a world of beauty, peace and abundance. And that they would live that way long after my death. That’s what I desire to be true. I deeply wish that I could believe that to be true.

So yes I do, I desire to believe what I desire to be true. But I simply cannot.

“How come we are the only ones that are really trying?” That is a very, very great question, and seeking the answer may shed a great deal of light on the whole oil supply issue, particularly in regard to LTO. Until just five years or so ago, there was minimal success in effectively extracting oil from the Bakken shale play. The continuing evolution of well completion and stimulation is enabling far, far more oil to be recovered, both initially and ultimately.

These procedures are just now starting to be emulated on a global scale, but since every shale play worldwide has unique characteristics, there will be a learning curve before Argentina, China, Australia and others successfully extract their own vast, known hydrocarbon reserves.

Well and sincerely expressed, sir.

Some hesitant cheer leading… followed by a dose of reality…

Alberta’s Duvernay lands: North America’s next big shale bonanza?

Jeff Lewis | Financial Post | 28/11/13 | Last Updated: 28/11/13 4:31 PM E

“The Duvernay is about as interesting and exciting a play as I’ve ever seen,” Hal Kvisle, Talisman’s CEO, said in a recent interview.

The formation may hold 443 trillion cubic feet of natural gas, 11.3-billion barrels of natural gas liquids and 61.7-billion barrels of oil, according to mid-point estimates by Alberta’s Geological Survey.

But it’s also expensive. Numbers vary depending on the operator, but well costs range from $10-million to as high as $20-million, making it harder for smaller companies to compete, analysts say.

…

Encana, which this year added 21,000 acres of Crown land to its Duvernay position, is targeting per well costs of between $12-million and $18-million in the region once it begins full commercial production, a spokesman said via email.

…

Even so, the high costs of sinking a well in the formation “make near-term profitability an issue and mean that deep pockets will be required to develop Duvernay, which we are not sure that Encana has,” Bernstein Research analyst Bob Brackett said in a recent note. A lack of processing infrastructure in the region could also constrain development, analysts at Peters & Co. say.

The Duvernay: North America’s Most Profitable Condensate Play?

by Keith Schaefer, Oil And Gas Investments Bulletin, on November 12, 2013

In the two years prior to these results, the industry had spent well over $2 billion developing the play—and there was literally just one well in the public domain, by Athabasca Oil, that gave me any reason to be excited.

But now —it could quickly turn into the Eagle Ford of Canada.

Kind of an endorsement, I suppose. Sure is expensive to produce though.

Alberta Voices, albertavoices.ca, has a series on fracking’s impacts in Alberta.

The Campbells: Alberta Ranchers

Methane migration into drinking water aquifer

Hat Tip: resilience.org

“The shallowness of the average human’s knowledge line is the fundamental problem. This amounts to profound ignorance of how the world works compared with the amount of knowledge available and needed to understand the world. It leads human beings into over reproducing, over consuming, and over trashing of the planet. Even when they hear that, for example, our activities are polluting the world, they can process the words, but not the deeper meaning. They do not understand how things are connected and their depth of any knowledge is abysmal. And that is why they fail to act on the information. They do not really understand.”

-George

For my information, will somebody please set me straight on the working definition of condensates, as opposed to crude oil and natural gas?

I know my basic organic chemistry, and that the very lightest molecules, such as methane and ethane, are by far the most common natural gas fractions, and that anything with more than a certain number of carbon atoms would always be considered crude oil.

But where the cutoffs are between crude, condensates, and natural gas I do not know.

I’m looking for a definition that would make sense to a person who knows no chemistry. For instance, natural gas must be contained in a pressure vessel of some sort or it disperses very quickly. It can be liquefied only if both pressurized and refrigerated to a very low temperature.

Most crude oil, to the best of my knowledge, will sit in an open container and evaporate, but rather slowly, at ambient temperatures. Some heavy crudes probably evaporate so slowly that they could be stored in an open container for a long time, maybe indefinitely.

Must condensates be pressurized to maintain them in a liquid state at ordinary ambient temperatures?

I’m presuming that as a practical matter, most condensates come out of wells producing mostly natural gas – the condensate molecules being any and all that are too big and heavy to remain in solution with the natural gas and interfering with storing and shipping it, and maybe with burning it too. A liquid droplet won’t pass thru a tiny gas nozzle orifice without potentially causing problems such as smoke and soot.

But it seems that some crude oils contain a lot of condensates too, implying that “condensates” are too volatile to stay in solution with the remainder of the oil, the non-condensate portion, at normal working temperatures and pressures in storage, perhaps?

I know why they are very valuable: they are used as diluents in transporting heavy oils and as feedstocks that make the processing of heavy oil into lighter finished products much easier.

You can find several definitions on the web but here is the one from the EIA and another one that tells exactly what lease condensate consist of.

EIA Glossary Condensate

Condensate (lease condensate): A natural gas liquid recovered from associated and non associated gas wells from lease separators or field facilities, reported in barrels of 42 U.S. gallons at atmospheric pressure and 60 degrees Fahrenheit.

Lease Condensate

A mixture consisting primarily of pentanes and heavier hydrocarbons which is recovered as a liquid from natural gas in lease separation facilities. This category excludes natural gas plant liquids, such as butane and propane, which are recovered at downstream natural gas processing plants or facilities.

Pentane has five carbon atoms and 12 hydrogen atoms, but condensate also contains some longer hydrocarbon chains. Lease condensate is primarily naphtha, which contains anywhere from 5 to 12 carbon atoms per molecule.

Octane, a major component of gasoline, contains 8 carbon atoms, so condensate does contain some octane.

But condensate is basically the liquid that condenses out of natural gas at atmospheric pressure and room temperature, and it contains molecules with from 5 to 12 carbon atoms. As the definition reads, though, it is mostly pentanes and other short chains.
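As a quick illustration of the carbon counts above: saturated acyclic alkanes follow the general formula CnH2n+2, so pentane is C5H12 and octane is C8H18. This Python sketch simply lists that series for the 5 to 12 carbon range quoted for naphtha; it covers only the alkanes and ignores the cyclic and aromatic molecules that real condensate also contains.

```python
def alkane_formula(n_carbon: int) -> str:
    """Molecular formula of a saturated, acyclic alkane with n carbons: CnH(2n+2)."""
    if n_carbon < 1:
        raise ValueError("an alkane needs at least one carbon atom")
    return f"C{n_carbon}H{2 * n_carbon + 2}"


# Straight-chain alkanes in the 5-12 carbon range quoted for naphtha.
NAMES = {5: "pentane", 6: "hexane", 7: "heptane", 8: "octane",
         9: "nonane", 10: "decane", 11: "undecane", 12: "dodecane"}

if __name__ == "__main__":
    for n, name in NAMES.items():
        print(f"{name:<9} {alkane_formula(n)}")
```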

In regard to the “Shale Oil Revolution” spreading around the world, Ron reports that the average Bakken Play well produced 135 bpd in the first half of 2013 (which is down from 2012). If we are looking at 100 to 150 bpd or so average per well production while the overall production from the play is still on the upslope of a production curve, how is this supposed to work in much higher operating costs areas like Siberia and the Middle East?

Also, my guess is that at least 90% of currently producing US shale/tight oil wells will be plugged and abandoned, or down to about 10 bpd or so, 10 years hence, in 2023.

I frequently cite the example of the 2007 vintage Barnett Shale gas wells on the DFW Airport Lease that Chesapeake asserted would produce for “At least 50 years.” 50 months turned out to be more accurate. In early 2013, about half of the 2007 vintage wells were already plugged and abandoned, and overall production from the 2007 vintage wells was down by 95%.

“If we are looking at 100 to 150 bpd or so average per well production while the overall production from the play is still on the upslope of a production curve, how is this supposed to work in much higher operating costs areas like Siberia and the Middle East?”

If initial production for the first month is 500 bpd, falling to 250 bpd by the end of the year, that’s an average of 375 bpd for the entire year. That’s 375 × 365 = 136,875 barrels, and at $100 a barrel, $13.7 million. That’s more than the quoted cost of wells in the Bakken, but not by very much. Multi-well pad drilling is bringing that cost down, but it would be a couple of years before the equipment to do that would become the norm in Siberia.

Production in year 2 would be down less than 50%. Call it 30%, which still yields about $9 million in revs. Let’s take it down another 20% in year 3 and we make $7 million. Beyond that we get unknown inflation stuff going on. It would also be useful to know the operating cost of an already completed, producing well. It ain’t free.

But overall I see about $30 million in revs in 3 years. I would have to generally agree that this ain’t much of a profit ratio if a lot of holes are dry.
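Watcher's back-of-the-envelope revenue estimate can be reproduced in a few lines of Python. The sketch assumes, as the comment implicitly does, that year-1 output declines linearly (so the average rate is the mean of the start and end rates), a flat $100 price, and further declines of 30% in year 2 and 20% in year 3. It computes gross revenue only, before any costs, royalties or taxes; the function names are illustrative.

```python
def year_one_output(start_bpd: float, end_bpd: float, days: int = 365) -> float:
    """Barrels produced in year 1, assuming a linear decline from start_bpd to end_bpd."""
    return (start_bpd + end_bpd) / 2 * days


def gross_revenue_by_year(start_bpd: float, end_bpd: float,
                          later_declines: list[float],
                          price: float = 100.0) -> list[float]:
    """Gross revenue per year: year 1 from the linear decline, each later
    year reduced by the given fractional decline from the year before."""
    revenues = [year_one_output(start_bpd, end_bpd) * price]
    for decline in later_declines:
        revenues.append(revenues[-1] * (1 - decline))
    return revenues


if __name__ == "__main__":
    revs = gross_revenue_by_year(500, 250, later_declines=[0.30, 0.20])
    for year, r in enumerate(revs, start=1):
        print(f"year {year}: ${r / 1e6:.1f} million")
    print(f"total: ${sum(revs) / 1e6:.1f} million")
```

This gives about $13.7, $9.6 and $7.7 million for years 1 to 3, roughly $30.9 million in total, consistent with the "about $30 million" estimate once rounded down.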

For starters, your numbers are wrong. The average first-month production is 400 b/d, not 500 b/d, or was at the end of 2012 according to David Hughes. And the decline rate is 70% the first year, not 50%. That is according to many sources, the latest being Roger Blanchard.

So initial production of 400 barrels per day falling to 120 by the end of the first year. Now do your math over and see what you come up with.
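Redoing the year-1 arithmetic with the figures Ron cites (400 bpd in the first month, 70% first-year decline), while keeping Watcher’s straight-line averaging as a simplifying assumption, gives a 120 bpd year-end rate and about 94,900 barrels, roughly $9.5 million at $100/barrel instead of $13.7 million. The helper name is hypothetical.

```python
def year_one_revenue(ip_bpd, first_year_decline, price=100.0):
    """Year-end rate and approximate year-1 revenue (hypothetical helper).

    Uses the straight-line average of the first-month and year-end rates,
    the same simplification as the estimate it is compared against.
    """
    year_end_bpd = ip_bpd * (1.0 - first_year_decline)
    avg_bpd = (ip_bpd + year_end_bpd) / 2.0
    return year_end_bpd, avg_bpd * 365 * price


end_rate, revenue = year_one_revenue(400, 0.70)
print(f"Year-end rate: {end_rate:.0f} bpd, year-1 revenue: ${revenue / 1e6:.2f} million")
```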

Hi Watcher,

I agree with Ron that 500 b for the first month is probably higher than the average Bakken well. To get the best match with the actual output data, given the number of wells added each month, my model uses even less than the 400 b estimated by Hughes (334 b). Output for the first 12 months is about 84 kb. Now remember that according to Rune Likvern’s analysis, about $7/barrel goes to operating and financial costs, $12/barrel goes to transport costs, and royalties and taxes are 26.5% of wellhead revenue (refinery gate price minus transport cost).

We will use your estimate of 137 kb for year 1 output and assume the refinery gate price is $100/barrel. That’s $13.7 million of total revenue before we deduct transport costs ($1.644 million), operating and financial costs ($0.959 million), and royalties and taxes ($3.195 million), which leaves $7.9 million.

The proper way to do this is to discount the cash flow in inflation-adjusted terms using an annual discount rate of 10 to 15%; I use 12.5% in my models. The wells are profitable in the sense that, with the assumptions above, a well cost of 9 million dollars and a refinery gate price of $92, they break even and pay back the initial cost of the well in real dollars after 5 years. If average well costs fall to 8 million dollars, the breakeven price falls to $84; at 7 million, breakeven is $76 per barrel. In every case above we have assumed no decrease in average new-well EUR. As the sweet spots get drilled up, new-well EUR will decrease and breakeven prices will increase, even with falling well costs. I assume this fall in new-well costs (in real dollars) will stop at 7 million in my models.
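The year-1 deductions above, plus the discounting step, can be sketched as follows. The per-barrel figures are the ones attributed to Rune Likvern’s analysis in the comment; the function names and the end-of-year discounting convention are assumptions for illustration (the actual models may discount monthly). A full breakeven calculation would also need the multi-year production profile and the well cost, which are not reproduced here.

```python
def year_one_net_cash(volume_bbl, gate_price=100.0, transport=12.0,
                      opex_and_finance=7.0, royalty_tax_rate=0.265):
    """Net cash from one year's output (hypothetical helper)."""
    gross = volume_bbl * gate_price
    transport_cost = volume_bbl * transport
    operating_cost = volume_bbl * opex_and_finance
    # Royalties and taxes are charged on wellhead revenue
    # (refinery gate revenue minus transport cost).
    royalties_taxes = royalty_tax_rate * (gross - transport_cost)
    return gross - transport_cost - operating_cost - royalties_taxes


def present_value(annual_cash_flows, rate=0.125):
    """Discount real (inflation-adjusted) cash flows, assuming each one
    arrives at the end of its year (an assumed convention)."""
    return sum(cf / (1.0 + rate) ** (year + 1)
               for year, cf in enumerate(annual_cash_flows))


net = year_one_net_cash(137_000)
print(f"Year-1 net cash: ${net / 1e6:.2f} million")
print(f"Discounted one year at 12.5%: ${present_value([net]) / 1e6:.2f} million")
```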

DC

Aloha Fred,

The IEA is merely disputing the prospect that the curve of worldwide aggregate oil production will be a classic Hubbert curve. The Hubbert curve has been found over and over, but until recently, only in countries that were competing with other low cost producers. The IEA is asserting that the shape of the world production curve will be distorted by changing economics of production, and that the “peak” will be much longer and flatter than the classic Hubbert curve.

What is not said, but implied by such a scenario, is that the decline after a long, flat peak will be much more rapid than the Hubbert curve. This is because more and more production will have shifted to geology with very high decline rates, LTO in the Bakken for example.

But the IEA projections also assume that the world economy and financial system will be able to accommodate continuously increasing prices and continuously declining per capita production. It’s only a hunch, but I don’t think BAU can last until 2035. When public confidence in BAU finally fails completely, the economic disruption will make the production decline very steep indeed.

Mr. Brown, I am a big fan of your work. Thank you, Mr. Patterson for the venue to comment.

I am not an engineer but must play one in real life every day as an operator. I understand the concept of exponential versus hyperbolic decline curve analysis pretty well but only so far as that relates to conventional reservoirs like those I produce from. I struggle with how a “self-contained” drainage radius in a dense shale will perform over time once induced frac energy, and subsequent solution gas energy is dissipated. All these long fat tails on tight oil type decline curves puzzle me greatly.

So my instincts after 50 years of producing the nasty stuff are similar to yours, I believe, Mr. Brown: who is to say that a tight oil well’s decline won’t actually be almost linear, straight down in the dirt? I simply cannot get my arms around the idea that these wells will decline to 15-18 BOPD and do that for 25 years. What’s the drive mechanism for that? In very tight Taylor age sandstones in S. Texas with millidarcies of permeability, when the energy was gone, so was the well. These shale wells have nanodarcies of perm.

Is there an example of a tight reservoir in the world where essentially only the frac radius is drained that has a hyperbolic, 30 year decline?

Hi Mike,

I believe the Bakken has been producing since 1953, and those early wells can be modeled fairly well with a hyperbolic model with a b exponent of about 0.5 and a 30-year EUR of 156 kb. These older wells were mostly vertical wells and had a much lower EUR than the current wells, which are projected to average about 390 kb over 30 years. I agree, however, that this well profile is optimistic (with a b exponent of 1.0, the so-called harmonic decline profile).

Hyperbolic model: qi = 10,500 b/m, b = 1, Di = 0.097. A more realistic model would use the hyperbolic model for the early years and then exponential decline of y% per year after x years. What would your guess be for x and y? I was thinking x = 10 years, y = 7% per year. Your thoughts would be of great interest, because your gut sense is probably much closer to the truth than my guesses.
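The harmonic profile and the hyperbolic-then-exponential alternative can be compared with a short Arps decline sketch. It assumes Di is a nominal decline per month (to match qi in barrels per month), treats the 7% per year as an effective annual decline, and uses the tentative x = 10 years, y = 7% only as an example; the function names are hypothetical. With these inputs the harmonic case accumulates about 388 kb over 30 years, consistent with the roughly 390 kb quoted above, while switching to 7%/year exponential decline after year 10 gives roughly 380 kb.

```python
import math


def arps_rate(qi, b, di, t):
    """Arps decline rate at time t (months). b = 0 is exponential,
    b = 1 is harmonic; di is assumed to be a nominal decline per month."""
    if b == 0:
        return qi * math.exp(-di * t)
    return qi / (1.0 + b * di * t) ** (1.0 / b)


def harmonic_cumulative(qi, di, t):
    """Closed-form cumulative for b = 1: Np = (qi / di) * ln(1 + di * t)."""
    return qi / di * math.log(1.0 + di * t)


def modified_hyperbolic_cumulative(qi, b, di, switch_month, annual_decline, months):
    """Hyperbolic decline until switch_month, then exponential decline of
    annual_decline per year (effective), summed month by month at mid-month."""
    q_switch = arps_rate(qi, b, di, switch_month)
    d_month = -math.log(1.0 - annual_decline) / 12.0  # nominal monthly rate
    total = 0.0
    for m in range(months):
        t = m + 0.5  # middle of month m
        if t < switch_month:
            total += arps_rate(qi, b, di, t)
        else:
            total += q_switch * math.exp(-d_month * (t - switch_month))
    return total


QI, B, DI = 10_500, 1.0, 0.097  # b/month, harmonic, per month (assumed units)
print(f"Harmonic, 30 years: {harmonic_cumulative(QI, DI, 360):,.0f} b")
print(f"Hyperbolic 10 yr, then 7%/yr: "
      f"{modified_hyperbolic_cumulative(QI, B, DI, 120, 0.07, 360):,.0f} b")
```

Trying other values of x and y only means changing the `switch_month` and `annual_decline` arguments.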

For the early Bakken wells, it looks like they may have lasted 20 to 30 years on average. I looked closely at producing wells over time: if wells are dropped from the producing list after 25 years (up to 1997) or 30 years (after mid-1997) when determining wells added each month, I get the best model estimate. It is the number of new wells added each month that matters (change in producing wells plus change in number of abandoned wells).

For 1955 to 2005 we use the following well profile:

The following is not a bad model match to the data considering we have used a single average well profile over a 50 year period:

Dennis Coyne

Re: Watcher,

And actually the median production rate would be more representative of a middle-case expectation for current production in the Bakken Play. For example, if 11 operators each had one producing well, with one producing 1,000 BOPD and the other 10 producing from 10 to 100 BOPD in 10 BOPD increments (10, 20, 30, etc.), the median production rate would be 60 BOPD, while the average would be 141 BOPD.

So the production distribution for the 11 wells would look like this, with the median (60) in the middle: 10, 20, 30, 40, 50, 60, 70, 80, 90, 100, 1000.

The 141 BOPD average would be mathematically accurate but highly misleading. The median would be far more representative of a more likely production rate for a given operator.
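The median-versus-average example above can be checked with Python’s standard `statistics` module, using the same 11 hypothetical wells:

```python
from statistics import mean, median

# The 11 hypothetical well rates from the example, in BOPD
rates = list(range(10, 101, 10)) + [1000]

print(f"Average: {mean(rates):.0f} BOPD")  # pulled up by the single 1,000 BOPD well
print(f"Median:  {median(rates)} BOPD")    # the 6th of 11 sorted values
```

A single outlier moves the average by more than 80 BOPD but leaves the median untouched, which is the point being made about per-operator expectations.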

Oh look! Replies are indenting.

BTW, y’all do realize the subject was Siberian shale, whose sweet spots have not yet been touched. So even though Helms clearly said far above 400 in September’s Director’s Cut, it’s not relevant to the Brown quote.

Really Watcher, Siberian shale production, if it ever happens, has to be at least a decade away. Remember, roads have to be built to every wellhead in order to carry those very heavy fracking fluid trucks. Building roads in the Siberian tundra is another matter altogether, very expensive and very seasonal. So let’s don’t start counting those Siberian chickens before the eggs have even been laid.

Oh well, no indentation.

“We will use your estimate of 137 kb for year 1 output and assume the refinery gate price is $100/barrel. That’s 13.7 million of total revenue before we deduct transport costs (1.644 million), operating and financial costs (0.959 million), and royalties and taxes (3.195 million), which leaves 7.9 million”

One small comment on the subject: do your calculations include the cost of dry holes? I presume there are enough dry holes, or holes with low output, to play a significant role in cash flow.

“On average, peak month production from the Bakken has risen on average to 465 bpd per well in the first half of 2013 from 450 bpd in the second half of 2012, IHS estimates.”

Mike,

It seems to me that the Austin Chalk would be a pretty good analogue case history for the long term production profiles from fractured tight reservoirs. As an example of production from high porosity and high permeability reservoirs, I frequently use the example of the updip wells in the East Texas Field, which in 1972 were capable of making virtually the same amount of oil that they could make in 1932.

And as noted above, an important point about the “Shale Plays Saving the World” theme is that median production rate is almost certainly much lower than the average production rate, while operating costs would be generally allocated on an average basis. Bottom line is that in very high cost operating areas, a lot of whatever the “tail” of low volume production might have been will be severely curtailed because of high operating costs. It’s just mind boggling to me that the conventional wisdom now seems to be that thousands of wells headed toward stripper well status will keep us on a virtually permanent upward slope in production.

Some thoughts on “Net Export Math.”

I used to say that perhaps 0.1% of the people in the world had some understanding of “Net Export Math” (partly because it fit well with my Titanic Metaphor, i.e., maybe three people at midnight on the Titanic, about 0.1% of the people on board, understood that the ship would sink).

However, on some days I think that it’s more accurate to say that a few dozen people may fully understand the math.

It’s a lot of things: multiple databases, multiple exponential functions, a counterintuitive conclusion, and a general disinclination, even among most Peak Oilers, to accept the ramifications.

In any case, I’m working on simplifying my update to the Export Capacity Index article, and I’m thinking of slides showing four key values (Production, ECI Ratio, Annual Net Exports and Remaining CNE, normalized to index years) for the ELM, the Six Country Case History and global data.

Some info follows.

SIX COUNTRY CASE HISTORY

Normalized Production, ECI Ratio, Annual Net Oil Exports and Remaining Post-1995 CNE by year (1995 values = 100%), for 1995 to 2002, for the combined production and consumption numbers of the Six Country* Case History:

2002 Values:

Production: 93%

ECI Ratio: 83

Net Exports: 65

Remaining CNE: 16**

*Six major net oil exporting countries that, since 1980, have hit or approached zero net oil exports, excluding China: Indonesia, UK, Egypt, Vietnam, Argentina, Malaysia

**Estimated Remaining Post-1995 CNE at the end of 2002 was 31%, based on extrapolating the 1995 to 2002 rate of decline in the ECI ratio.

Here is a link to a draft of the Six Country slide:

http://i1095.photobucket.com/albums/i475/westexas/Slide1_zpsf0483ede.jpg

Note that as Six Country production increased by 3% from 1995 to 1998, remaining Post-1995 Six Country CNE fell by 40%.

GLOBAL DATA

Normalized Production, ECI Ratio, Annual Net Oil Exports and Remaining Post-2005 CNE by year (2005 values = 100%), for 2005 to 2012, for the combined production and consumption numbers of the Top 33 Net Oil Exporters in 2005 (Global Net Exports, or GNE):

2012 Values:

Production: 102%

ECI Ratio: 87

Net Exports: 96

Est. Remaining CNE: 79

Based on the 1995 to 2002 rate of decline in the Six Country ECI Ratio, the estimated post-1995 Six Country CNE depletion rate was about 17%/year. The actual post-1995 Six Country CNE depletion rate was 26%/year from 1995 to 2002.

Based on the 2005 to 2012 rate of decline in the Global ECI ratio, the estimated post-2005 Global CNE depletion rate was 3.4%/year from 2005 to 2012.
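The depletion rates quoted above appear to be consistent with a continuous (logarithmic) annual rate, −ln(remaining CNE fraction) / years, over the 7-year windows. That reading is my assumption rather than a stated part of the ECI method, but it reproduces the 17%, 26% and 3.4% figures from the 31%, 16% and 79% remaining-CNE values. The function name is hypothetical.

```python
import math


def implied_depletion_rate(remaining_fraction, years):
    """Continuous annual depletion rate implied by the fraction of
    cumulative net exports (CNE) still remaining after `years`.
    The log-rate interpretation is an assumption."""
    return -math.log(remaining_fraction) / years


cases = [
    ("Six Country, estimated (31% remaining)", 0.31),
    ("Six Country, actual (16% remaining)", 0.16),
    ("Global, estimated (79% remaining)", 0.79),
]
for label, remaining in cases:
    print(f"{label}: {implied_depletion_rate(remaining, 7):.1%}/year")
```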

The fundamental irony about the post-2005 situation in OECD countries is that they are stimulating their economies like crazy, in effect propping up oil consumption, and contributing to an increased CNE depletion rate.

Definitions:

ECI (Export Capacity Index) Ratio = Ratio of Total Petroleum Liquids Production (plus other liquids for EIA data) to Liquids Consumption

CNE = Cumulative Net Exports of oil

Six Country Case History: Indonesia, UK, Egypt, Vietnam, Argentina, Malaysia

ELM = Export Land Model
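Because net exports are production minus consumption, they equal consumption × (ECI − 1), so a modest fall in the ECI ratio can mean a much larger fall in net exports. A minimal sketch with made-up figures for a hypothetical exporter (not data from the slides); the function names are hypothetical:

```python
def eci_ratio(production, consumption):
    """Export Capacity Index: liquids production divided by liquids consumption."""
    return production / consumption


def net_exports(production, consumption):
    """Net exports = production - consumption = consumption * (ECI - 1)."""
    return production - consumption


# Hypothetical exporter, figures in mbpd (illustrative only, not real data)
base_prod, base_cons = 2.0, 1.0
later_prod, later_cons = 1.9, 1.2

for label, p, c in [("Base year", base_prod, base_cons),
                    ("Later year", later_prod, later_cons)]:
    print(f"{label}: ECI {eci_ratio(p, c):.2f}, net exports {net_exports(p, c):.2f} mbpd")

print(f"Production change: {later_prod / base_prod - 1:.0%}")
ne_change = net_exports(later_prod, later_cons) / net_exports(base_prod, base_cons) - 1
print(f"Net export change: {ne_change:.0%}")
```

In this made-up case production falls 5% while net exports fall 30%.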

Should read: Note that as Six Country production increased by 3% from 1995 to 1998, remaining Post-1995 Six Country CNE fell by 40%.

Thank you, Mr. Brown.

I participated (lost my shirt) in a number of Austin Chalk efforts; in that case we were dealing with naturally fractured carbonate and the theory was that frac’ing would simply connect those natural fractures and improve well bore conductivity. I am still very confused as to how these dense shale wells with permeability of concrete will perform 10 years from now. You imply they may be all stripper wells and I don’t understand the drive mechanism that will keep these wells producing ANYTHING after a certain point. We assume, rather the shale oil industry assumes, these shale wells will act like conventional reservoirs and produce for 25 years. I am trying to understand how. At one point I thought drilling laterals with the heel downdip would allow gravity drainage but they now seem to be drilling laterals with heel to toe northwest to southeast.

My fairly learned observation about the Chalk is that fewer than 2% of those wells drilled in the early 80’s produced more than 15 years after birth. When the play was revitalized in the 90’s by drilling laterals thru the natural fracture system, fewer than 10% of those wells lived 15 years later. It is a good analogy.

So, if over half the expected EUR from a shale well is to be recovered after the first 4 years of production life, over the next 25 years, I ain’t buying it.

I am a little off topic, thank you for the leeway.

Techguy

I don’t have any data on dry holes. So these are not included in my breakeven calcs.

DC

Hi marmico

That is possible. My model tries to use a single well profile to match NDIC data from 2008 to the present.

If the IHS estimate is correct, the well profile matching the data with first-month output at 465 b would need a steeper decline to fit the data.

I think a lot of drilling in 2012 may have been to hold leases. In 2013 the focus shifted to the sweet spots, and this would explain the IHS finding. At some point the locations start to run out. Some suggest downspacing as a solution; I think EUR per well goes down under that scenario.

DCoyne

So let’s don’t start counting those Siberian chickens before the eggs have even been laid.

Sure. But parts of the Bazhenov shale oil formation lie below producing Western Siberian conventional oil fields. Think of the Bazhenov as the Three Forks of the Williston Basin: a deeper geologic formation that could be extracted with the benefit of infrastructure already in place.

Mike,

To clarify slightly, my guess is that at least 90% of currently producing shale oil wells will be plugged and abandoned, or down to 10 BOPD or less, in 2023.

Globally, the $64 Trillion question is to what extent the past 10 years of GNE/CNI data are indicative of the next 10 years. Two key charts:

GNE = Global Net Exports of oil, i.e., combined net exports from (2005) Top 33 net oil exporters (EIA, total petroleum liquids + other liquids)

CNI = Chindia’s Net Imports

Normalized Liquids Consumption for China, India, Top 33 Net Oil Exporters and the US, 2002 to 2012, Versus Annual Brent Crude Oil Prices:

http://i1095.photobucket.com/albums/i475/westexas/Slide14_zpsb2fe0f1a.jpg

GNE/CNI Ratio, Extrapolated to 2030:

http://i1095.photobucket.com/albums/i475/westexas/Slide1_zps9ff3e76d.jpg

Incidentally, given the above, the working number I use for oil prices is this: in my opinion, in my little corner of the Oil Patch at least, we are likely to see a minimum wellhead oil price of at least $100 per barrel, after all royalties and costs, in constant 2013 dollars, over the life of the property.

So, a 10% working interest in a one million barrel field should provide an undiscounted constant dollar cumulative net cash flow of at least $10 million to the 10% working interest.

Old TOD hands will remember me as a frequent commenter convinced that we are in overshoot biologically and economically, and that we are headed for an inevitable hard crash at some point within the foreseeable future.

I haven’t changed my mind on this point, but I am no longer convinced the crash will arrive within the next three or four decades.

The economy has proven to be far more resilient than I expected with respect to energy prices, and conservation efforts are being ramped up faster than I expected, at least on an individual per capita basis in the richer countries where most consumption occurs.

Furthermore, I think the tipping point at which unsubsidized wind and solar power become cost competitive with fossil-fuel-sourced electric power is closer than most people expect, and that the business community will jump into wind and solar in an extremely big way, comparable to past booms in housing and technology. I will hazard a guess that this will come to pass within ten years.

I also believe that the actual cost of using coal and natural gas will rise far faster than most people anticipate. The wholesale price determines what is extracted, of course, but the delivered price determines what is bought, and between the two there is often an enormous difference.

IIRC, Wyoming coal that was selling for about twelve dollars a ton a year or so ago cost about five or six times as much after delivery to the state of Georgia.

It seems likely, as I see things, that a lot of heavy new taxes will be levied on energy producers and consumers alike, for two simple reasons.

Governments everywhere are starving for revenue, which is reason enough to believe producers cannot forever escape higher taxes; and higher taxes are well known to sharply reduce consumption in energy importing countries, which are already having a hard time coming up with the means to pay for imported energy.

Conversely, rising energy prices provide a powerful incentive for consumers to reduce consumption and adopt a more energy-frugal lifestyle, and for businesses to change their energy-hog ways. It used to cost three or four dollars an hour to fuel an eighteen-wheel road truck and ten to twenty dollars an hour to hire somebody (non-union) to drive it. Now it costs thirty dollars an hour to fuel such a truck, whereas, given the slow economy, truck drivers are lucky to make twenty dollars an hour.

Every heating and air contractor big enough to maintain inventory has heat pumps in stock, but nobody keeps an oil furnace in stock these days; you have to order an oil furnace a week ahead to allow for shipping it from a factory warehouse.

Now there is nothing original or new in those observations, but I think that the implications of all of them, and of others of a similar nature that I could make, taken together, are going to have an impact on energy use, and therefore on peak oil, that is considerably greater than the sum of the parts.

My feeling nowadays is that this impact is going to be greater than most of us in the peak oil camp anticipate, and that it will result in the oil production curve having a much wider and flatter peak than I anticipated a few years ago.

Having said this much, I also believe that current mainstream estimates of economically recoverable natural gas and coal are likely . But this may paradoxically also result in wider and flatter peaks of production of coal and natural gas.

My reasoning is, first, that as time passes the cost of producing all three fossil fuels must inevitably rise; second, that industry and consumers will be able to adapt to rising prices faster than most observers anticipate, thereby allowing the economy to avoid a collapse or very deep depression due to high fuel prices.

We may find we can manage oil prices of one hundred fifty dollars per barrel, in present-day money, ten years from now as well as we manage 100 dollar oil today. It will be possible to produce a lot more oil at 150 than at 125 or 130 dollars, which is a price often mentioned in future forecasts.

Our elderly ’97 Buick uses only a little more than half as much gas as the vehicle it replaced, a ’67 Ford, but it is as large, more comfortable, and quite fast enough for any legitimate need short of delivering moonshine.

I expect a similar size 2017 Buick to run on no more than two thirds of the fuel the 97 needs.

I have very little money compared to most of the American people, but I am nevertheless going to install a heat pump this spring, once our current supply of four-dollar kerosene is used up, to replace the high-efficiency kerosene-fueled furnace we use for backup heat (I burn wood cut on the place: no hauling, no middlemen involved).

Again there is nothing new here, but I think most people who think about these things tend to overlook the synergistic effects- the positive and negative feedbacks involved.

Peak oil properly and strictly defined is probably very close at hand, but the actual grand scale ill economic effects of it are probably farther down the road than we think.

Insofar as BAU and energy are concerned, things are probably more stable than they look.

Thanks again, WT; the GNE/CNI ratio you predict should scare the britches off of everyone. I am good with your minimum wellhead price going forward, though in the short term, in my patch, all the oil hotels in Houston are putting up no-vacancy signs; it is difficult to term up anything at the moment. Short of a Middle East issue in 2014, I look for WTI plus to be going down, down. Let’s see what that does to the shale rig count.

Mr. Harold Hamm has presented his own view of Peak Oil:

http://usnews.nbcnews.com/_news/2013/11/04/21266984-meet-harold-hamm-the-billionaire-behind-americas-great-renaissance-of-oil?lite

NBC loves his POV: “You’ll find no better guide to the new world of energy than Hamm. ”

“This month Continental Resources told investors that the region (The Bakken) contains enough recoverable oil to double the official count of U.S. reserves and enough “oil in place” to meet the nation’s needs for hundreds of years. While those claims have not been verified by regulators, Hamm’s track record makes them hard to doubt. ”

“The peak oil people have all been wrong,” he said. “They completely missed the boat.” Since reaching a high of 60 percent in 2005, net oil imports have fallen by nearly half and domestic production is at a 25-year high and rising faster than ever.

“By 2017, America is expected to be “all but self-sufficient,” according to a report by the International Energy Agency, which attributed this “profound” change to the Hamm-led rebound in oil and gas production. Yes, the nation still uses more crude than it pumps but when the IEA factors in liquid natural gas and biofuels, the balance swings in favor of energy independence.

“It’s tremendous,” said Hamm, who expects to more than triple his own production by 2017.

“We have more oil than the Saudis,” he said. “So we need to develop it.”

Plenty more where that came from at the linked article.

The thing is, both Mr. Hamm and Peak Oil-aware people are right; who is right is time-domain dependent. If the governments can keep the QE/extend-and-pretend train moving ahead for another decade or so, maybe world PO won’t occur until about 2025. Oil will continue at today’s prices, to maybe as high as a buck-fifty a bbl, and a gradually declining BAU may persist for another decade or two.

Too bad people and governments will continue to use the time they have had (since 1972…or 1956…or the time of Malthus) to restructure their societies and lives to adapt to the coming new reality.

Edit: Too bad people and governments will NOT continue to use the time they have had…

ND new production is delayed by winter weather. Wonder how Siberian winter weather would delay shale production in the Bazhenov?

Re: Hamm

(The US has) “enough “oil in place” to meet the nation’s needs for hundreds of years.”

There is of course, a “slight” difference between oil in place and recoverable reserves, especially in tight/shale plays, but if we focus on crude oil (C+C), the US processes about 15 mbpd (5.5 Gb/year).

So, over a 200 year period, we would need recoverable reserves of about 1,100 Gb to meet current US refinery demand for crude oil, from domestic sources. This would be equivalent to the EUR from about 50 North Slopes of Alaska.
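The 200-year requirement above is a straight multiplication; a small sketch (hypothetical helper name) makes the unit conversion explicit: 15 million barrels per day × 365 days is 5.475 billion barrels per year, and 200 years of that is about 1,095 Gb.

```python
def crude_needed_gb(runs_mbpd, years):
    """Cumulative crude (billion barrels) needed to supply a constant
    refinery run rate given in million barrels per day."""
    annual_gb = runs_mbpd * 365 / 1000.0  # million b/d x days -> billion b/year
    return annual_gb, annual_gb * years


annual, total = crude_needed_gb(15.0, 200)
print(f"{annual:.3f} Gb/year, {total:,.0f} Gb over 200 years")
```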

Citi Research puts the decline rate from existing US gas production at about 24%/year. For the sake of argument, let’s assume that the US hits a crude oil production level of 15 mbpd in 2020, and let’s assume that the decline rate from existing oil production stabilizes at 20%/year. Based on this metric, we would need about 3 mbpd of new production every year to maintain a 15 mbpd production rate. So, over a 200 year period, we would need to put on line the productive equivalent of 600 mbpd of new production, about eight times current global crude oil production, in order to maintain a crude oil production rate of 15 mbpd for 200 years, based on the above assumptions.
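The replacement arithmetic can be sketched as follows, assuming (as the comment does) that the 20%/year decline applies to a steady 15 mbpd base every year, so each year’s loss must be made up by new capacity. The helper name is hypothetical.

```python
def replacement_needed(plateau_mbpd, decline_rate, years):
    """New capacity (mbpd) needed per year, and in total, to hold a plateau
    when existing production declines by a constant fraction per year."""
    per_year = plateau_mbpd * decline_rate
    return per_year, per_year * years


per_year, total = replacement_needed(15.0, 0.20, 200)
print(f"{per_year:.1f} mbpd per year, {total:.0f} mbpd over 200 years")
```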

Jeffrey,Ron, and all the ones ‘doing the PO math’:

Thank you for your diligent research and patient repetition of your findings.

Unfortunately, as long as U.S. C&C production keeps rising, World C&C production fluctuates around a plateau, and the ‘all liquids’ obfuscation continues to be effective, the PO-aware people will patiently play the role of Cassandra.

Even after the data irrefutably shows a year-after-year decline at some point down the road, the memories of the shale/LTO ‘revolution’ may keep the dream alive (of discovering another wave of oil) for many years, with the people’s ire directed at the usual cast of claimed culprits: big government regulation, socialism, and environmental madmen bent on destroying our greatness.

All that being understood, please keep discerning and posting the facts of the matter. I have found peakoilbarrel and ourfiniteworld to be worthy sites for a decent description of Peak Oil/peak finance reality. I took the red pill, and I am at peace with reality.

The “we have more oil than the Saudis” stuff is probably more disquieting than anything else. Way too much US centric corruption of dispassionate evaluation there.

As for the day the decline is reported having impact . . . if you read a bit about Scotland’s maneuvering to become independent of the UK, you’ll see an outright avalanche of claims that the UK’s (Scotland’s) portion of North Sea production can ramp up if one only has the right tax policy and sufficient “investment” (aka frantic drilling), the royalties from which will fund the new independent country (the UK is supposed to just let them take all the wells without recompense, you see) and lead to a bright future away from the jack booted heel of London. When you need votes, you say things like that and no one ever got elected telling people their lifestyle decline is forever.

Similar stuff in Argentina. The socialist government there was/is dependent on oil royalties. Repsol couldn’t profitably do the frantic drilling to ramp production. Argentina got paid with production royalties and cared not a bit about the profit of who produced the oil. So Repsol stopped drilling and Argentina went crazy and nationalized the YPF segment. The latest? A deal with Repsol to provide recompense for the grab, with Repsol making an undefined commitment to develop Argentina’s shale. Once again, no way in hell that government could continue to tell the people they are out of oil and the future is dim. Instead, hope.

Farmer Mac Wrote:

“Furthermore I think the tipping point at which actual non subsidized wind and solar power become cost competitive with fossil fuel sourced electric power is closer than most people expect.”

My opinion differs, because wind and solar cost estimates do not include the infrastructure needed to make them economical. Both wind and solar are intermittent power sources, while demand is not. Power producers need to back them with natural gas or other baseload power plants to handle sudden power dips when the wind drops out or cloud cover blocks solar plants. Solar also only works about 5.5 hours (at full capacity) a day when factoring in weather (overcast, rain, snow, etc.), night, and times when the sun is low on the horizon. Most solar installations are very high maintenance, as the panels or mirrors need to be cleaned frequently because dirt collects on their surfaces. It’s likely that we are past peak or near peak solar and wind development.

Perhaps if we had invested in alternative energy solutions 25 or 30 years ago, there might have been a chance. However, we are well past the prime as the US economy continues to slide. There are simply not enough resources and time to make any transition away from fossil fuels.

“Governments everywhere are starving for revenue, which is reason enough to believe producers cannot forever escape higher taxes; and higher taxes are well known to sharply reduce consumption in energy”

Raising energy costs cuts gov’t revenue, as energy consumption is required for commerce. The less energy that is consumed, the less economic activity will occur, leading to declining tax revenue. I have no idea why gov’ts in the West (US and EU mostly) are making energy more expensive. Any cutbacks in consumption simply move consumption to Asia. We are not husbanding the remaining energy supplies, just shifting who consumes them.

In my opinion the US is making a terrible mistake in its war on coal. The US gov’t is betting the farm on a long-term supply of natural gas to provide energy, and we know it’s just a mirage. Sooner or later natural gas is going to become very expensive, and with the destruction of coal-fired plants there will be nothing remaining to prevent nationwide power shortages that will probably result in a collapse. Electricity is very important to keep our computer-controlled infrastructure running. No electricity, no food distribution.

Cutting CO2 emissions in the US will not alter or prevent climate change, as Asia will build new coal plants to offset any reductions in the US. Furthermore, the coal that used to fire US power plants is often now shipped overseas.

“We may find we can manage oil prices of one hundred fifty dollars per barrel, in present day money”

I have serious doubts that the world or the US can manage $150/bbl. It’s barely managing at $100/bbl. If you look at the US labor participation rate, it’s falling. This is partly because higher energy prices are causing job losses. The US is shedding manufacturing jobs overseas because the costs are lower and there are no regulations on energy and minimal pollution regulations. The only job growth in the US is in part-time retail jobs, which isn’t sustainable. The EU is utterly imploding, with unemployment rates now exceeding 20%.

Labor Participation declines

http://www.stlouisfed.org/publications/re/articles/?id=2419

“Insofar as bau and energy are concerned, things are probably more stable than they look.”

Systems are stable right up to the tipping point. However, once the tipping point is breached, it’s almost impossible to stop the disaster. We are a lot closer to the tipping point than we were in 2005-2008.

Hi Techguy,

I am hoping with that name you are an engineering/science type and not just someone who likes technology. Have you seen the study from U Delaware,

http://www.sciencedirect.com/science/article/pii/S0378775312014759

From the abstract:

“We model many combinations of renewable electricity sources (inland wind, offshore wind, and photovoltaics) with electrochemical storage (batteries and fuel cells), incorporated into a large grid system (72 GW). The purpose is twofold: 1) although a single renewable generator at one site produces intermittent power, we seek combinations of diverse renewables at diverse sites, with storage, that are not intermittent and satisfy need a given fraction of hours. And 2) we seek minimal cost, calculating true cost of electricity without subsidies and with inclusion of external costs. Our model evaluated over 28 billion combinations of renewables and storage, each tested over 35,040 h (four years) of load and weather data. We find that the least cost solutions yield seemingly-excessive generation capacity—at times, almost three times the electricity needed to meet electrical load. This is because diverse renewable generation and the excess capacity together meet electric load with less storage, lowering total system cost. At 2030 technology costs and with excess electricity displacing natural gas, we find that the electric system can be powered 90%–99.9% of hours entirely on renewable electricity, at costs comparable to today’s—but only if we optimize the mix of generation and storage technologies.”

If you happen to be an electrical engineer or just a very knowledgeable person, your comments on this study, which can be downloaded at the link above, would be of interest.

DC

From your report: Our model evaluated over 28 billion combinations of renewables and storage, each tested over 35,040 h (four years) of load and weather data.

Wow! Now that is a lot of combinations to run, four for every human being on earth. But of course it is a computer model and these days computers run very fast.
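The scale is easy to check. A minimal sketch; the 7.1 billion world population for 2013 is an approximation supplied here, not a figure from the study.

```python
combinations = 28e9           # combinations evaluated, from the abstract
hours_per_run = 4 * 365 * 24  # four non-leap years of hourly data
world_population = 7.1e9      # assumption: approximate 2013 world population

print(hours_per_run)                    # 35040, matching the abstract
print(combinations / world_population)  # roughly four combinations per person
print(combinations * hours_per_run)     # total hour-steps simulated
```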

But Dennis, it’s a computer model. Things just might work out differently in real life. For instance, they admit that at any single site the power generated is intermittent. But over a huge area they can manage to get power 90 to 99.9 percent of the time. But this would mean that all sites would have to be tied together over hundreds of miles. They are implying that even at night, with no solar power, enough wind power would be up at one time to carry the entire load.

Well no, I take that back; that is not what they are implying at all. At night, and at times when there is virtually no wind, they will rely on storage. They say: “with only 9–72 h of storage.”

Ahhhh, there is the crux. Just how many hours of storage do we have right now that will store power for that long? Would you believe a big fat zero? There is some water storage, but that is already built into the system, and hydro power, at any rate, would only power a tiny portion of the grid.
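To put “9–72 h of storage” in energy terms, multiply the hours by the load the store has to carry. A minimal sketch, assuming for illustration only that the full 72 GW system size from the abstract is that load; the paper may define storage hours against average rather than peak load, in which case the real figures would be smaller.

```python
def storage_energy_gwh(load_gw, hours):
    """Energy a store must hold to carry `load_gw` for `hours` hours."""
    return load_gw * hours

system_gw = 72  # system size quoted in the abstract, used here as the load (assumption)
for h in (9, 72):
    print(f"{h:>2} h x {system_gw} GW = {storage_energy_gwh(system_gw, h):,} GWh")
```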

But apparently they believe that by 2030 they will have that technology.

The model was run for three storage technologies: centralized hydrogen, centralized batteries, and grid integrated vehicles (GIV), the latter using plug-in vehicle batteries for grid storage when they are not driving (also called “vehicle to grid power” or V2G) [15] and [16]. Wind and solar are parameterized as GW capacity, storage is parameterized as GW throughput and GWh energy storage capability. Storage is additionally characterized by losses in storing or releasing electricity, plus the standby losses while sitting idle.
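The storage description in that excerpt (throughput in GW, capacity in GWh, losses storing and releasing, plus standby losses) maps onto a small state object. A minimal sketch, assuming one-hour time steps and a throughput limit that applies on the grid side in both directions; the class name `Storage` and the example parameter values are hypothetical, not the paper’s.

```python
from dataclasses import dataclass

@dataclass
class Storage:
    """Toy storage unit stepped one hour at a time (hypothetical parameters)."""
    power_gw: float       # max grid-side charge/discharge per hour (GW)
    energy_gwh: float     # usable energy capacity (GWh)
    charge_eff: float     # fraction of grid energy actually stored
    discharge_eff: float  # fraction of stored energy delivered to the grid
    standby_loss: float   # fraction of stored energy lost per idle hour
    stored_gwh: float = 0.0

    def idle(self) -> None:
        """Apply one hour of standby loss."""
        self.stored_gwh *= 1.0 - self.standby_loss

    def charge(self, offered_gwh: float) -> float:
        """Absorb surplus for one hour; return the grid energy actually taken."""
        room = (self.energy_gwh - self.stored_gwh) / self.charge_eff  # grid energy that still fits
        taken = min(offered_gwh, self.power_gw, room)
        self.stored_gwh += taken * self.charge_eff
        return taken

    def discharge(self, wanted_gwh: float) -> float:
        """Serve a deficit for one hour; return the grid energy delivered."""
        deliverable = self.stored_gwh * self.discharge_eff
        delivered = min(wanted_gwh, self.power_gw, deliverable)
        self.stored_gwh -= delivered / self.discharge_eff
        return delivered

# Example: charge for an hour, sit idle for an hour, then discharge.
battery = Storage(power_gw=1.0, energy_gwh=4.0, charge_eff=0.95,
                  discharge_eff=0.95, standby_loss=0.001)
taken = battery.charge(1.0)
battery.idle()
out = battery.discharge(1.0)
print(taken, out)
```

In this example the grid gets back 0.95 × 0.999 × 0.95 of what went in; a hydrogen store would use the same structure with much lower efficiencies.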

Okay, they have hydrogen, batteries, and vehicles with batteries. That is, if you are not driving your car, you can plug it into the grid, dumping power back to the grid, where they really need that power.

Dennis, are you really falling for this stuff?

OFM,

You obviously have put a lot of thought into these issues.

I would recommend your post to anyone wishing to gather a spectrum of opinions on the PO issue.

If I knew how this would play out, I would be king of my own beautiful island.

I apologize ahead of time if my following comments are not appropriate/relevant on this blog focusing on Peak Oil issues, but I was motivated to do significant reading recently after engaging in a cyberspat about oil reserves worldwide. I mentioned how the recent advances in LENR (cold fusion) may greatly affect all of us in a most positive fashion. Man, did I get hammered as being a kook.

Well, after poring over several hours’ worth of news coming out of this field in recent months and years, I gotta tell you all that I was astonished. While I will not relate most of the stuff here, you may be surprised (as I was) that NASA, MIT, Lawrence Livermore, Toyota, Mitsubishi – to name just a few – have publicly stated that “anomalous” readings have regularly been observed during experiments. A Swiss company, STMicroelectronics (50,000 employees, $8 billion/year revenue), actually applied this past February for a US patent for their LENR device. Most intriguing of all to me is an outfit out of Berkeley – Brillouin Energy. They just received $20 million of “put up or shut up” money to set up their stuff in an unused power plant to crank out the juice for a local utility. Heady stuff if true. We may be on the cusp of a whole new way of looking at and generating energy.

Right! I hear that any day now the US Congress is going to overturn the laws of thermodynamics. But pity those folks in the rest of the world; they will still have to abide by those silly laws.

I dunno, Dennis. Sheesh. Do a test?

Continental says that its 2013 production will triple by 2017. Is that Bakken or the 350 exploratory Three Forks wells? What’s the ratio of wells to benches? I dunno.

The point is that Watcher said 500 bpd, Patterson countered with 400 bpd, Old Farmer Mac “cut and pasted” his 3-year-old TOD soliloquy, you counter-countered with <400 bpd and I linked to Reuters which reported IHS at 465 bpd.

My position is that IHS has better data and algorithms than Watcher, Patterson, Old Farmer Mac and Dennis.

The energy wing of IHS is known as CERA. Daniel Yergin is Vice Chairman of IHS.

Wikipedia: IHS Inc.

IHS Inc. (IHS) is a company based in Douglas County, Colorado, United States.[4]

IHS serves international clients in five major areas: energy, product lifecycle, environment, security and Electronics and Media. IHS provides industry data, technical documents, custom software applications, and consulting services. IHS brands include Jane’s Information Group, Cambridge Energy Research Associates (CERA), and John S. Herold, Inc.

Read this wonderful article by CERA’s chief energy expert, Daniel Yergin:

Congratulations, America. You’re (Almost) Energy Independent.

Daniel Yergin is vice chairman of the global information company IHS and author of The Quest: Energy, Security, and the Remaking of the Modern World.

I know you probably have not been following the discussion about Daniel Yergin, but his bubbly, over-the-top estimates and predictions are well known around here.

Hi Marmico,

The number Watcher gave is based on comments by the director of the NDIC Oil and Gas division (Lynn Helms) and his assumption that the EIA’s drilling productivity report gives the correct legacy well decline for the Bakken. Ron’s number is based on research by David Hughes, a well-respected geoscientist who worked for the Canadian Geological Survey for 32 years.

My numbers are an attempt to create a model which matches NDIC output data. Using a lower initial production (qi in a hyperbolic model) requires a lower initial decline rate (Di in the hyperbolic model). The 330 barrel/day first month production gives the best fit to the data over the 2010 to 2013 time period, but I can force my model to use a higher first month production at the expense of a poorer fit over that time period.
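For reference, these are the standard Arps hyperbolic decline relations that the qi and Di parameters come from, with b the hyperbolic exponent (b > 0, b ≠ 1), t the time since first production and N_p the cumulative output. This is the textbook form; the exact variant used in the model may differ.

```latex
q(t) = \frac{q_i}{\left(1 + b\,D_i\,t\right)^{1/b}}, \qquad
N_p(t) = \int_0^t q(\tau)\,d\tau
       = \frac{q_i}{(1-b)\,D_i}\left[1 - \left(1 + b\,D_i\,t\right)^{(b-1)/b}\right]
```

Cumulative output at a given t rises as D_i falls, so holding the total fixed while lowering q_i forces the fitted D_i down, which is the trade-off described above.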

For a model with a well profile with a 1st-month output of 450 b/d and a 30-year EUR of 320 kb, we get:

Compare this to my original model and notice the better fit to the data from 2010 to the present.
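Fixing the first-month output and the 30-year EUR pins down the initial decline once the hyperbolic exponent b is chosen. A minimal sketch that bisects for Di using the standard Arps hyperbolic cumulative formula; b = 1.2 is a hypothetical value, and treating the 450 b/d first-month average as the instantaneous qi is a simplification, so this will not reproduce the model’s own parameters.

```python
def cumulative_bbl(qi, di, b, years):
    """Arps hyperbolic cumulative output (bbl); qi in b/d, di per year, b != 1."""
    days_per_year = 365.25
    di_day = di / days_per_year  # nominal decline converted to per-day
    t = years * days_per_year    # elapsed time in days
    return qi / ((1 - b) * di_day) * (1 - (1 + b * di_day * t) ** ((b - 1) / b))

def solve_di(qi, eur_bbl, b, years, lo=1e-6, hi=100.0, iters=200):
    """Bisect for the initial decline (per year) that yields the target EUR.
    Cumulative output falls as Di rises, so [lo, hi] brackets the root."""
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if cumulative_bbl(qi, mid, b, years) > eur_bbl:
            lo = mid  # too much oil: the decline must be steeper
        else:
            hi = mid
    return 0.5 * (lo + hi)

b = 1.2  # hypothetical hyperbolic exponent, not taken from the model
di = solve_di(qi=450, eur_bbl=320_000, b=b, years=30)
print(f"Di = {di:.2f}/yr, check EUR = {cumulative_bbl(450, di, b, 30):,.0f} bbl")
```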

DC

“The point is that Watcher said 500 bpd”

Nah, I’m nobody and am going to stay that way. I don’t say anything.

The data says things. Right here: http://www.resilience.org/stories/2013-11-22/analysis-of-well-completion-data-for-bakken-oil-wells Just go through it. Most drilling is in the prime counties and they are showing numbers way above 400.

BTW, factoid: I dug into where this guy got his data. The Bakken Weekly looks like an insert on weekends or something in the Bismarck Trib. I went through some recent issues. Here’s one with some info:

http://bismarcktribune.com/bakken/bakkenweekly/page-p/page_7d3b3840-c789-5fa2-a5aa-bcc2177fd6e3.html

Page 20 begins a profile of drilling permits, followed by well completion data. They are STILL drilling predominantly in those prime counties. That looks to me like very constrained geography.

Hi Watcher,

I thought you had done some calculation to come up with 500 b/d for average 1st-month production; I probably misremembered (is that a word?). Note that initial production (IP) is the first 24 hours of output and is generally higher than the average for the first 30 days. So the numbers in your link are a little bit of an apples-to-oranges comparison.

Oil companies use high IP numbers for impressive press releases. A 500 b/d number for the 1st-month average daily output is possible, but I think a number like 400 to 450 makes more sense, and the best match to NDIC output and well data for the Bakken/Three Forks is something even lower (about 330 b/d for first-month average output).

DC

That is not what the data says. Four counties have average initial production of above 600 bpd. Blanchard does not say what he means by “initial production”, but that could very well mean the first 24 hours. I know that is what The Bakken News means when they say “initial production”. And no, The Bakken News is not an insert from the Bismarck Trib. I got the paper free for three weeks, but they wanted too much for a full subscription and I passed. But it is a separate paper in its own right.

Two other counties have initial production of above 200 bpd, one above 100 bpd, and all the other counties in the state have initial production of less than 100 bpd.

Nowhere in that report does it give average initial production for all the Bakken and for sure nowhere does it give average first month’s production for all the Bakken. But my money is on David Hughes’ report:

Drill, Baby, Drill

Figure 64 illustrates the highest one-month production recorded for wells in the Bakken play. The variability of the wells illustrates the differing geological properties within various parts of the play. The mean IP is 400 bbls/d with very high quality wells at more than 1,000 bbls/d, amounting to less than five percent of the total. The average production of all operating Bakken wells is now 124 bbls/d, because of the effect of steep well declines and the fact that overall field production is from a mix of old and new wells.

If you are at even as low as 500 bpd in the first microsecond of well production, and your decline is 60% in the first year, that’s only going to be 60/12 = 5% in the first month. The average decline over that month is thus 2.5% (the average of 0% decline in the first microsecond and 5% on day 30). That’s a lousy 12.5 bpd. It doesn’t take 500 down to 400. It takes it to 487.5. And the article just noted talks about north of 600 for the primo wells, which is where the vast majority of drilling is.
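The 487.5 figure assumes the 60% first-year decline is spread evenly (linearly) across the year, so the answer depends on the shape of the decline curve, which is the objection raised in the next comment. A minimal sketch comparing the first-month average rate under three curve shapes that all lose 60% in year one; the hyperbolic exponent b = 1.5 is a hypothetical choice, and none of these curves captures the gap between a 24-hour IP and a 30-day average.

```python
import math

Q0 = 500.0          # starting rate, b/d (figure from the comment)
KEEP = 0.40         # fraction of Q0 left after one year (60% decline)
MONTH = 1.0 / 12.0  # length of the first month, in years

def linear_avg():
    # straight-line decline: 5% gone by day 30, so the month averages 2.5% off
    return Q0 * (1 - (1 - KEEP) * MONTH / 2)

def exponential_avg():
    d = -math.log(KEEP)  # continuous decline rate per year
    return Q0 * (1 - math.exp(-d * MONTH)) / (d * MONTH)

def hyperbolic_avg(b=1.5):  # b is a hypothetical Arps exponent
    di = (KEEP ** (-b) - 1) / b  # initial decline that leaves 40% after one year
    cum = Q0 / ((1 - b) * di) * (1 - (1 + b * di * MONTH) ** ((b - 1) / b))
    return cum / MONTH  # cumulative over the month divided by its length

for name, f in [("linear", linear_avg), ("exponential", exponential_avg),
                ("hyperbolic", hyperbolic_avg)]:
    print(f"{name:>11}: {f():.1f} b/d first-month average")
```

With the same 60% first-year loss, the more front-loaded the curve, the lower the first-month average.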

It doesn’t make sense to me to use a study of generic wells that produced oil, prior to the days of 30 fracking stages, as the basis for extrapolation — when instead you have actual data from the actual counties over the actual rock.

Okay, the decline is not linear. The decline is greatest in the first month. And Blanchard gave no data on the average decline for the average Bakken well because that data is not in The Bakken News. Also, The Bakken News lists hundreds of wells with an N/A for initial production. I strongly suspect that this is because they have slim to no initial production at all.

You wrote: It doesn’t make sense to me to use a study of generic wells that produced oil, prior to the days of 30 fracking stages, as the basis for extrapolation…

I need to know: where did you get that information? The Hughes study was not about generic wells and all the wells were recent. Continuing with your comment: – when instead you have actual data from the actual counties over the actual rock.

The Bakken News gives a lot of data, but they do not come even close to giving you all the data. The wells are listed by company name and there is lots of bragging about the big ones they bring in, but almost nothing about the dry holes they drill. A simple N/A is all you get and there are lots of them.

“But it is a separate paper in its own right.”

That’s good data. I did notice they had a masthead with its own publisher and editor, but I guess maybe they buy space on the Bismarck Trib’s website, because the URL is a page of bismarcktribune.com.

dcoyne, the calculation was from Helms’ explicit statement (there’s a picture of him in that link; he looks upper 50s) that 200-something well completions were almost 3X the minimum required to grow production.

The decline rate for the field in the relevant month was 50K bpd. We believe that or we don’t. 200 / almost 3 = about 80 or 90 or so wells, which he says are required for breakeven. For 90 wells to offset 50K bpd of decline, the wells must have flowed 555 bpd. Simple arithmetic.
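The breakeven arithmetic can be written out directly. The 50K bpd monthly decline and the roughly 200 completions come from the comment; the two completion ratios are only there to show how sensitive the answer is to “almost 3X”. The result is the average rate each new well must add, not a 24-hour IP.

```python
def breakeven_rate(decline_bpd, wells_per_month):
    """Average rate each new well must add to offset one month's decline."""
    return decline_bpd / wells_per_month

DECLINE = 50_000  # b/d of field decline in the month cited
for ratio in (3.0, 2.5):  # "almost 3X"; 2.5 is an illustrative alternative
    wells = 200 / ratio
    print(f"{wells:.0f} wells -> {breakeven_rate(DECLINE, wells):.0f} b/d per well")
print(f"90 wells -> {breakeven_rate(DECLINE, 90):.0f} b/d per well")
```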

Hi Watcher,

We have conflicting data from several sources. The production data by Blanchard is interesting, we have Helms’ comments, and we have the data from the NDIC. My model is quite simple and is based primarily on a hyperbolic decline model (I started with Mason’s model and modified it based on data from Rune Likvern) and the number of producing wells and output provided by the NDIC. The number of producing wells is modified slightly by going all the way back to 1953 and looking at the changes in producing wells over time. On average it looks like wells were abandoned at 25 to 30 years. I use 25 years up to 1996 and 30 years from 1997 to the present, and as those old wells drop out I assume an additional new well is added to replace each one, so the total wells added each month is slightly higher to account for this (though the effect is minor).
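The structure described here, one hyperbolic well profile applied to every cohort of wells added each month, with wells dropping out at an abandonment age and being replaced, amounts to summing shifted copies of a type curve. A minimal sketch under stated assumptions: the function names, the decline parameters (di = 2.0/yr, b = 1.2) and the well schedule are hypothetical (only the 330 b/d starting rate comes from the discussion), a single fixed cutoff replaces the 25/30-year split, and the rate is taken at each month’s midpoint rather than as a true monthly average. It is not the model’s actual code.

```python
def type_curve(age_months, qi=330.0, di=2.0, b=1.2):
    """Hypothetical Arps hyperbolic rate (b/d) at the midpoint of a month."""
    t = (age_months + 0.5) / 12.0  # well age in years
    return qi / (1 + b * di * t) ** (1 / b)

def simulate(producing_wells, abandon_months=360):
    """Modeled field output (b/d) for each month.

    producing_wells: producing-well count per month (e.g. from NDIC).
    New wells each month = net increase in the count + wells retired that
    month, so every well retired at `abandon_months` is replaced.
    Net decreases in the count are ignored in this simplified sketch."""
    added = []
    output = []
    for m, count in enumerate(producing_wells):
        prev = producing_wells[m - 1] if m > 0 else 0
        retired = added[m - abandon_months] if m >= abandon_months else 0
        added.append(max(0, count - prev) + retired)
        # sum every cohort that is still younger than the abandonment age
        total = 0.0
        for start in range(max(0, m - abandon_months + 1), m + 1):
            total += added[start] * type_curve(m - start)
        output.append(total)
    return output

# Hypothetical schedule: 10 new wells every month for three years.
wells = [10 * m for m in range(1, 37)]
out = simulate(wells)
print(f"month 36 output: {out[-1]:,.0f} b/d")
```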